Jimmyjames · Elite Member · Joined Jul 13, 2022 · Posts: 1,507

This video has been shared fairly widely, and I thought it might make for an interesting discussion. I’ll preface this thread by saying that much of this is over my head and I’m still trying to understand it, so some of my statements may be incorrect.

Here is the video:

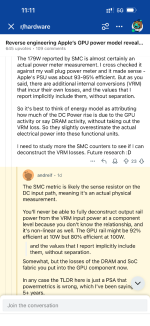

Here is a screenshot from the conclusion:

Essentially, the video argues that Apple’s power measurement tools and APIs are incomplete because they rely on a model rather than on direct measurement. The author noticed a significant discrepancy between power measured at the wall and power reported in software. After some analysis, they conclude that Apple’s model doesn’t account for data movement when reporting GPU power. It seems that data movement is increasingly expensive on modern GPUs.
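To make the claimed mechanism concrete, here is a toy sketch of a counter-based power model that charges only compute events, compared against a stand-in for a wall measurement that also includes data movement. The energy-per-event numbers, the workload, and every function name here are hypothetical illustrations, not Apple’s model or anything from the video.

```python
from dataclasses import dataclass

# Hypothetical energy per event, in picojoules. Illustrative assumptions only,
# not Apple's model coefficients and not measured values.
ENERGY_PJ = {
    "alu_op": 1.0,      # one arithmetic operation inside the GPU
    "dram_byte": 20.0,  # moving one byte between DRAM and the GPU
}


@dataclass
class Workload:
    alu_ops_per_s: float
    dram_bytes_per_s: float


def dynamic_watts(rates: dict[str, float]) -> float:
    """Sum of (events per second x picojoules per event), converted to watts."""
    return sum(rate * ENERGY_PJ[name] / 1e12 for name, rate in rates.items())


def modeled_gpu_watts(w: Workload) -> float:
    # Hypothetical model that only charges compute events to the GPU.
    return dynamic_watts({"alu_op": w.alu_ops_per_s})


def wall_delta_watts(w: Workload) -> float:
    # Stand-in for the extra power a wall meter would see under load:
    # compute plus data movement (ignores PSU losses, idle power, etc.).
    return dynamic_watts({"alu_op": w.alu_ops_per_s,
                          "dram_byte": w.dram_bytes_per_s})


w = Workload(alu_ops_per_s=10e12, dram_bytes_per_s=200e9)
modeled = modeled_gpu_watts(w)
measured = wall_delta_watts(w)
print(f"model: {modeled:.1f} W, wall delta: {measured:.1f} W, "
      f"unaccounted: {measured - modeled:.1f} W")
```

With these made-up numbers the model reports 10 W while the wall delta is 14 W; the 4 W gap is exactly the data-movement term the model leaves out. A real wall reading would also include conversion losses and other components, so this only shows the shape of the argument, not its magnitude.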

My uneducated question is: does that make sense as a critique of the power measurement Apple provides? I wonder if part of the problem is that, in a unified memory architecture (UMA) like Apple Silicon, deciding what counts as "GPU" power versus "CPU" power is a judgement call. The cost of moving data certainly matters for overall power consumption, but isn’t it just as likely that Apple counts as GPU power only the ALU/compute work that takes place within the GPU itself? If so, Apple should do a better job of accounting for the residual power, but is that residual clearly part of a GPU power measurement?
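The attribution question can be illustrated with a small sketch: the same total energy, charged to the "GPU" under three different accounting boundaries. The breakdown, the 75% traffic share, and the policy names are all hypothetical; nothing here describes how Apple actually splits shared memory-system power.

```python
# Hypothetical energy for one measurement interval, in joules (illustrative only).
ENERGY_J = {
    "gpu_cores": 10.0,     # compute work inside the GPU
    "cpu_cores": 3.0,      # compute work inside the CPU
    "shared_memory": 4.0,  # fabric + DRAM traffic used by both CPU and GPU
}


def gpu_energy(policy: str, gpu_traffic_share: float) -> float:
    """Energy charged to the 'GPU' under a hypothetical accounting policy."""
    if policy == "cores_only":
        # Narrow boundary: only work done inside the GPU itself.
        return ENERGY_J["gpu_cores"]
    if policy == "split_by_traffic":
        # Shared memory energy split in proportion to the GPU's share of traffic.
        return ENERGY_J["gpu_cores"] + gpu_traffic_share * ENERGY_J["shared_memory"]
    if policy == "all_shared_to_gpu":
        # Broad boundary: all shared memory energy charged to the GPU.
        return ENERGY_J["gpu_cores"] + ENERGY_J["shared_memory"]
    raise ValueError(f"unknown policy: {policy}")


total = sum(ENERGY_J.values())
for policy in ("cores_only", "split_by_traffic", "all_shared_to_gpu"):
    gpu = gpu_energy(policy, gpu_traffic_share=0.75)
    print(f"{policy:18s} GPU={gpu:5.2f} J  rest={total - gpu:5.2f} J  total={total:.2f} J")
```

The total never changes; only the line drawn around "GPU" moves it between 10 J and 14 J. A wall meter sees the total, so whether the gap counts as a GPU measurement error depends on which boundary the software is meant to report.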

I would value input on this since, as I said, much of this is currently beyond my knowledge.

Edit: It seems the video has to be viewed on YouTube directly, as the creator has disabled viewing elsewhere. Annoying.
