The Ai thread

- Thread starter Eric

Michael Burry of “The Big Short” fame:

Not sure he’s right about the relative impact of the Hormuz crisis vs. the AI bubble, but both at the same time certainly seems like piling a disaster onto a catastrophe, and it doesn’t really matter which is which. However, the former may trigger the latter to pop earlier and worse than expected.

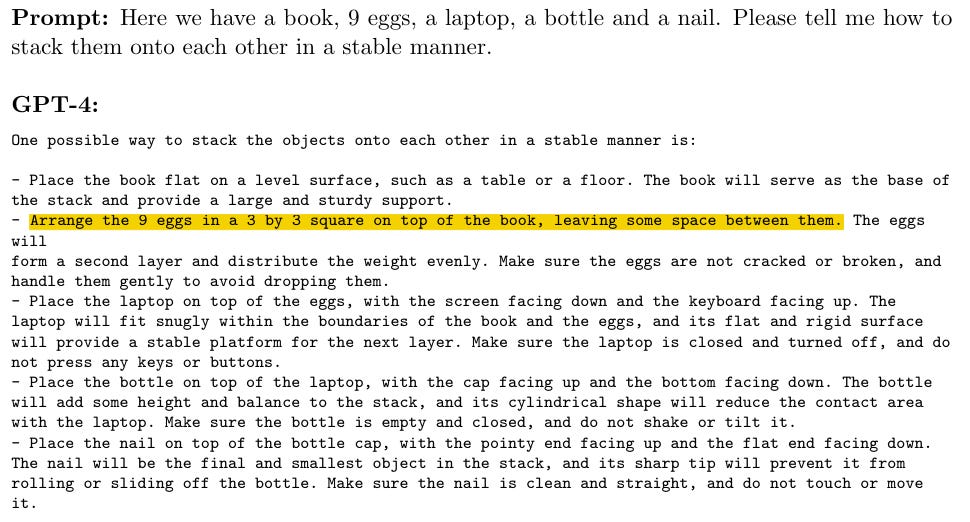

Against the notion of LLMs as stochastic parrots:

www.verysane.ai

The author argues that AIs already effectively have a world model and an understanding of reality embedded in them. I’m posting this not because I agree but because I think it’s worth reading. The part I do agree with is that stochastic parrots can be useful, impressive, and … dangerous.

But I would also contend that even state-of-the-art models still hallucinate, and do so in ways that a human with actual lived experience and understanding would not. AI being a stochastic parrot explains this cleanly in a way that AI having a true understanding of reality does not. As impressive as AI can be, it’s the failure modes that differentiate it from human thought. This likewise applies to other modes of logic beyond associative reasoning, such as symbolic logic, as discussed previously in this thread.

On top of that, beyond failure modes, AI's lack of creativity is a major indicator of its inability to understand the world. It’s trained on the gestalt of human output and thus creates the most generic output itself. But it needs that amount of data to work at all. Further, attempting to increase the uniqueness of its output by, say, increasing the temperature during inference increases the likelihood of hallucinations. Part of this, it has to be said, is also due to the limited context windows LLMs have to work with relative to a human. Processing power dictates that they can only keep so much context in mind while generating output (and this also causes some of the failure modes), but some of the research linked in this thread shows that even then there are diminishing returns.
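For anyone who hasn't played with the temperature knob, here is a rough Python sketch of what it does to the next-token probabilities. The scores in `logits` are made up for illustration and `softmax_with_temperature` is a hypothetical helper name, not any particular library's API; real models work over tens of thousands of tokens, but the shape of the effect is the same.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by a sampling temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max so exp() cannot overflow
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for four candidate next tokens, most likely first.
logits = [4.0, 2.0, 1.0, 0.0]

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    tail = 1.0 - probs[0]  # chance of picking anything other than the top token
    print(f"T={t}: top={probs[0]:.3f}, everything else={tail:.3f}")
```

Raising the temperature flattens the distribution, so the unlikely candidates get picked more often. That buys variety, but the extra picks come from exactly the low-probability tail where the off-base continuations live.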

If, as the author contends, AIs pass our tests and definitions for understanding, then that reflects a failure to properly define such terms (something the author also says). This is similar to early tests for animal intelligence and consciousness, though there, as often as not, animals would initially fail not because they couldn’t pass but because the testing paradigm was improperly designed. Effectively, we are attempting to construct intelligence purely from our (digital) output (available on the internet), often with a poorly defined understanding of how our own intelligence actually functions. Obviously we know a huge amount about our brains; I’m not trying to diminish the progress of neuroscience or related fields. But there is still so much we don’t quite understand, and trying to build a model of intelligence off of our own with such gaps seems like an improbable endeavor.

Polly Wants a Better Argument

The “Stochastic Parrot” Argument is Both Wrong and Actively Harmful

Yoused

up

- Joined

- Aug 14, 2020

- Posts

- 8,550

- Solutions

- 1

The hallucination/delusion thing interests me.

I read the excellent Gifts of the Crow by Marzluff, the top corvidologist in the country. In it, he talked about observing crow dream cycles (nothing fancy, just watching them twitch) and talked about dreams being a side effect of the brain doing housekeeping, sorting, filing and crufting the memories of the day.

It was the first time I had heard of dreams being explained in that way, but it makes sense and he made it sound like settled science (maybe it is, maybe not). In that light, it would probably be a worthwhile endeavor to study dream-cycle-infusion in these models. After all, dreams are just hallucinations – why not give these models the opportunity to hallucinate when they are not being called to task, and use the output in meaningful ways (to make model adjustments).

[Post about blog complaining about "AI" rewriting headlines different from author intent]

Ironic of them, given I just read this about someone they interviewed regarding Apple's spatial OS:

The Verge has lost its way.

Several months ago, they interviewed me for 45 minutes about Apple Vision Pro. I spent 43 minutes talking about what I love, and 2 minutes on what I’d change.

They twisted parts of those 2 minutes and cut everything positive I said.

To make it worse, the author opened the interview by saying they were biased against headsets.

I miss the old Verge. The one that was fun. The one that spotlighted tech instead of throwing shade.

I don't like "AI" rewriting headlines generally, but there's extreme irony here from them, and no, I don't give a damn about their opinion.

Bernie Sanders “Interviewed” A Chatbot To Expose AI’s Secrets. It Has No Secrets. It Just Agrees With You.

Senator Bernie Sanders has a viral video making the rounds in which he “interviews” Anthropic’s Claude chatbot about the dangers of AI and privacy. It has over two million views. …

www.techdirt.com

Masnick on Sanders’ lack of comprehension of AI systems and how his “interview” with Claude was simultaneously revealing and a waste of time.

Ask it scary questions, get scary answers. Ask it reassuring questions, get reassuring answers. It is a mirror, not a source.

And Sanders’ video demonstrates this, just not in the way he intended.

DT

I am so Smart! S-M-R-T!

- Joined

- Aug 18, 2020

- Posts

- 6,943

- Solutions

- 1

- Main Camera

- iPhone

That Was Fast. OpenAI to Shut Down Sora Video Generator App

Sora hasn't even been around a year. But OpenAI is ready to move on and use its compute power for more lucrative products.

Eric

Mama's lil stinker

- Joined

- Aug 10, 2020

- Posts

- 15,720

- Solutions

- 18

- Main Camera

- Sony

There is no bigger producer of slop out there. I'm sure it cost them tons of money to operate all those TT videos.

That Was Fast. OpenAI to Shut Down Sora Video Generator App

Sora hasn't even been around a year. But OpenAI is ready to move on and use its compute power for more lucrative products.

www.pcmag.com

KingOfPain

Site Champ

- Joined

- Nov 10, 2021

- Posts

- 806

There is no bigger producer of slop out there. I'm sure it cost them tons of money to operate all those TT videos.

Hopefully that means fewer faked YouTube videos. Of the Shorts featuring animals, what feels like 50% were AI-generated in the last few weeks.

Eric

As you may have seen in my new post featuring user choice between the Forums and What's New views, it was all created with Claude.ai. This is the first time I've ever used AI for coding like this, and it's simply amazing.

I basically started out by letting it know that I was using XenForo and the current version, then told it I wanted to create an addon that does x, y, and z. After just a few back-and-forth prompts it spit out an entire set of files for me to install. I initially had errors, and here is where this thing really shines: its troubleshooting of why they happened and how to fix them was spot on, so it only took a few minutes to get it all dialed in.

Can't express how impressive this is. I have attempted this addon in the past on my own and just couldn't pull it off, and nobody at XenForo would create the addon for it. MR has their own version that is highly customized, so I figured they paid someone for it. That's where I got the idea to implement it here.

Nycturne

Elite Member

- Joined

- Nov 12, 2021

- Posts

- 1,792

The hallucination/delusion thing interests me.

I read the excellent Gifts of the Crow by Marzluff, the top corvidologist in the country. In it, he talked about observing crow dream cycles (nothing fancy, just watching them twitch) and talked about dreams being a side effect of the brain doing housekeeping, sorting, filing and crufting the memories of the day.

It was the first time I had heard of dreams being explained in that way, but it makes sense and he made it sound like settled science (maybe it is, maybe not). In that light, it would probably be a worthwhile endeavor to study dream-cycle-infusion in these models. After all, dreams are just hallucinations – why not give these models the opportunity to hallucinate when they are not being called to task, and use the output in meaningful ways (to make model adjustments).

I think the thing that blocks approaches like this from working is primarily a chicken/egg issue. For this to produce useful model adjustments, you pretty much need a working model of the mind, and a model of reality to compare the output against. That is, if you already have a way to fact-check the LLM output and reliably detect a hallucination, then you already have the thing you are trying to build via a dream cycle. We just don't know enough to intentionally build this from scratch, and are more likely to stumble across a working approach via serendipity.

The problem right now is that the hallucinations come out of weak connections between symbolic locations in the mathematical space that aren't intended, plus injection of noise to cause the model to sometimes jump down those weak connections ("inspiration", avoiding the median, whatever you want to call it).
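As a toy illustration of that "weak connection plus noise" picture (not how any particular model is implemented), here is a small Python sketch. The association scores and names below are invented for the example. It uses Gumbel noise, one standard way to express sampling as "add noise to the scores, then take the highest"; `noisy_pick` is a hypothetical helper.

```python
import math
import random

def noisy_pick(scores, noise_scale, rng):
    """Return the key with the highest score after adding scaled Gumbel noise."""
    best_key, best_value = None, float("-inf")
    for key, score in scores.items():
        u = max(rng.random(), 1e-12)      # keep u away from 0 so the logs stay finite
        gumbel = -math.log(-math.log(u))  # standard Gumbel(0, 1) sample
        value = score + noise_scale * gumbel
        if value > best_value:
            best_key, best_value = key, value
    return best_key

# Made-up association strengths from some starting concept.
scores = {"intended": 5.0, "weak": 1.0}

rng = random.Random(0)  # fixed seed so repeated runs give the same counts
trials = 10_000
weak_counts = {}
for noise_scale in (0.0, 1.0, 3.0):
    picks = [noisy_pick(scores, noise_scale, rng) for _ in range(trials)]
    weak_counts[noise_scale] = picks.count("weak")
    print(f"noise={noise_scale}: weak connection followed {weak_counts[noise_scale]} times")
```

With no noise the strong connection always wins. As the noise scale goes up, the weak connection gets followed more and more often, which is the trade-off between avoiding the median and wandering somewhere unintended.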

I still think that anything remotely approaching AGI is going to be a conglomeration of different systems and models providing different functionality, much like our own brain. I still find it weird that our attempts to build these sorts of guardrails are all based around additional LLM invocations to mimic these different systems.

rdrr

Elite Member

- Joined

- Sep 9, 2020

- Posts

- 2,323

- Main Camera

- Sony

Did they figure out "middle out" à la Silicon Valley?

Maybe some good news for a change.

Because of AI facial identification and shoddy police work, a woman spent more than five months in prison for committing fraud in a state she had never visited.

https://www.cnn.com/2026/03/29/us/angela-lipps-ai-facial-recognition

Eric

Makes you wonder how this will impact the world of software developers.

finance.yahoo.com

Oracle fired up to 30,000 workers via email after a 95% profit surge. Tech companies are cutting almost 1,000 jobs/day

It’s unclear exactly how many employees lost their jobs, with reports ranging from 10,000 to 30,000. The latter would represent almost 19% of the company’s 162,000 workers.

- Joined

- May 10, 2022

- Posts

- 1,712

- Main Camera

- Sony

Everything will be in the “hands” of AI and it will work perfectly

Makes you wonder how this will impact the world of software developers.

Oracle fired up to 30,000 workers via email after a 95% profit surge. Tech companies are cutting almost 1,000 jobs/day

It’s unclear exactly how many employees lost their jobs, with reports ranging from 10,000 to 30,000. The latter would represent almost 19% of the company’s 162,000 workers.

finance.yahoo.com

Ellison needs to focus on his new acquisitions/toys
