Decided to make a thread for this one, since it's a pretty big release and should be Intel's attempt to get closer to the competition on battery life.

www.notebookcheck.net

This is an old leak, but an interesting one. Basically, they're going to be able to match the M1 GPU's perf/W, but not much more. Is that good? Yes and no; it depends on your perspective.

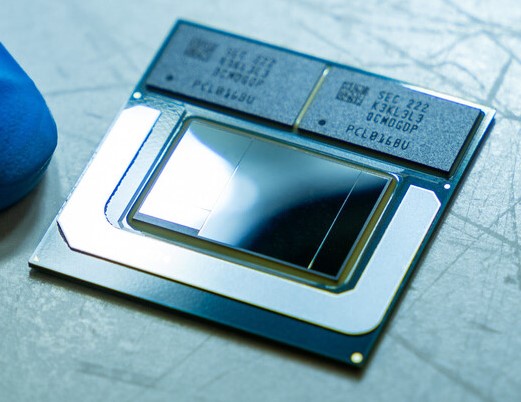

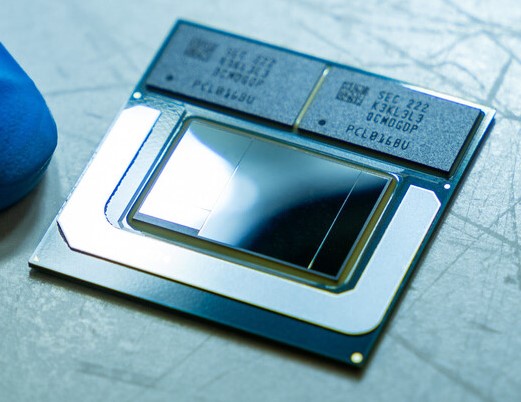

Massive leak details Intel Lunar Lake MX architecture releasing late 2024

Intel's upcoming Lunar Lake MX processors will come in Core 5 and Core 7 flavors with 4 P-cores and 4 E-cores and up to 8 Xe2-LPG Battlemage graphics cores that can almost match Apple's M2 iGPU performance. The entry-level 8 W SKU will not require active cooling.